Executive Summary

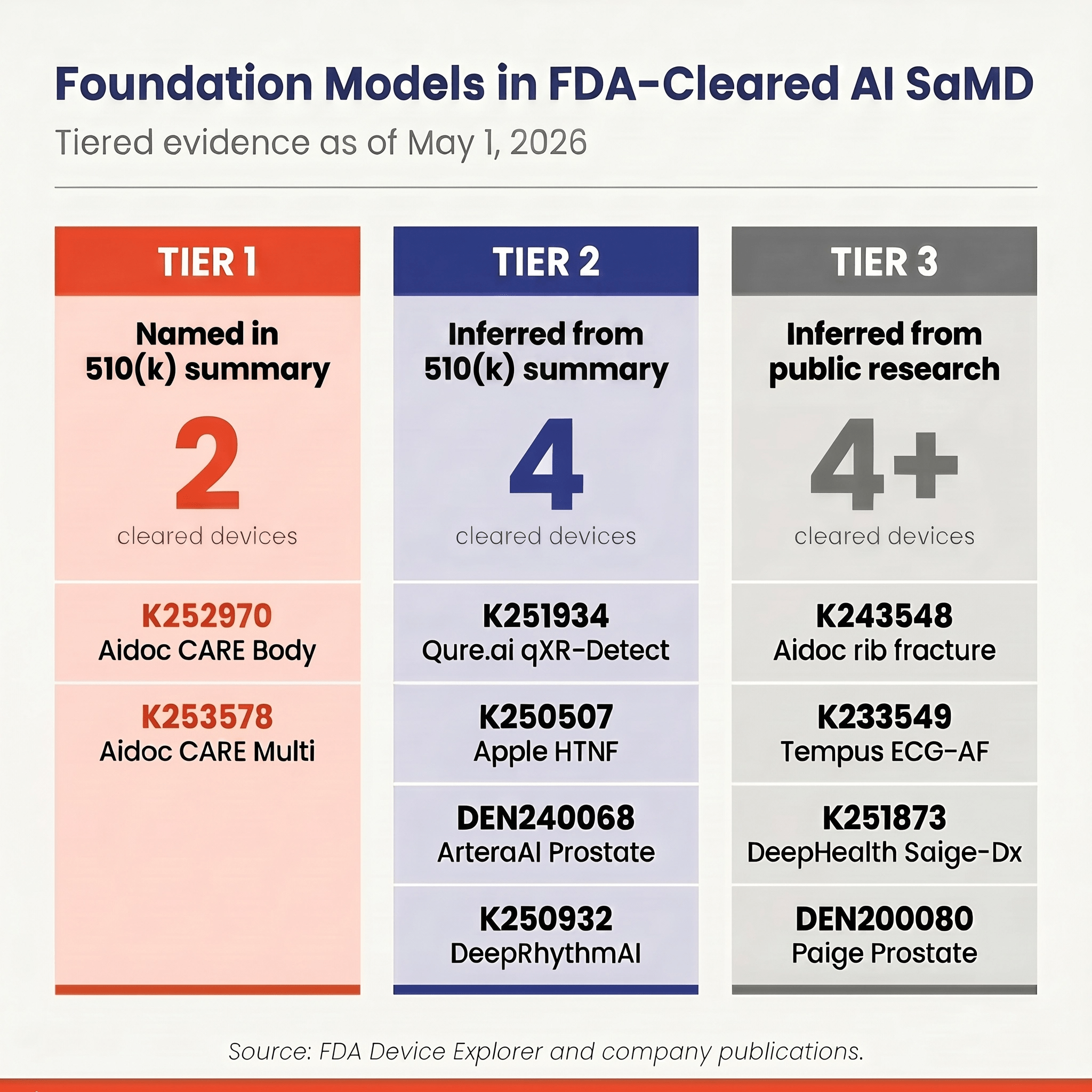

- 14 cleared devices identified as using or built on foundation model architectures, across three evidence tiers.

- Tier 1 — Explicit "foundation model" language (2): K252970, K253578. Both are Aidoc CARE multi-triage tools cleared in early 2026.

- Tier 2 — Explicit transformer or self-supervised architecture, without the phrase (4): K251934 (Qure.ai qXR-Detect), K250507 (Apple Hypertension), DEN240068 (ArteraAI Prostate), K250932 (Medicalgorithmics DeepRhythmAI).

- Tier 3 — Inferred from press releases, peer-reviewed research, or generalist capability (8): K243548 (Aidoc Rib Fractures), K233549 (Tempus ECG-AF), K251873 (DeepHealth Saige-Dx), DEN200080 (Paige Prostate), K252366 (a2z-Unified-Triage), K253818 (Annalise Enterprise), K211678 (Lunit INSIGHT MMG), K251151 (RapidAI).

- The architectural shift is broader than the FDA database suggests. Manufacturers often describe novel architectures using established "deep learning" terminology to smooth the path to substantial equivalence.

The Arrival of Foundation Models in Medical Devices

Artificial intelligence in medical devices has historically relied on narrow, single-task algorithms trained on highly curated datasets. A model trained to detect a pneumothorax on a chest CT could not detect a rib fracture without being retrained from scratch. This paradigm shifted with the advent of foundation models. These large-scale models are pre-trained on vast amounts of unlabeled or loosely labeled data using self-supervised learning. They can then be fine-tuned for a wide variety of downstream tasks with significantly less labeled data and shorter development cycles.

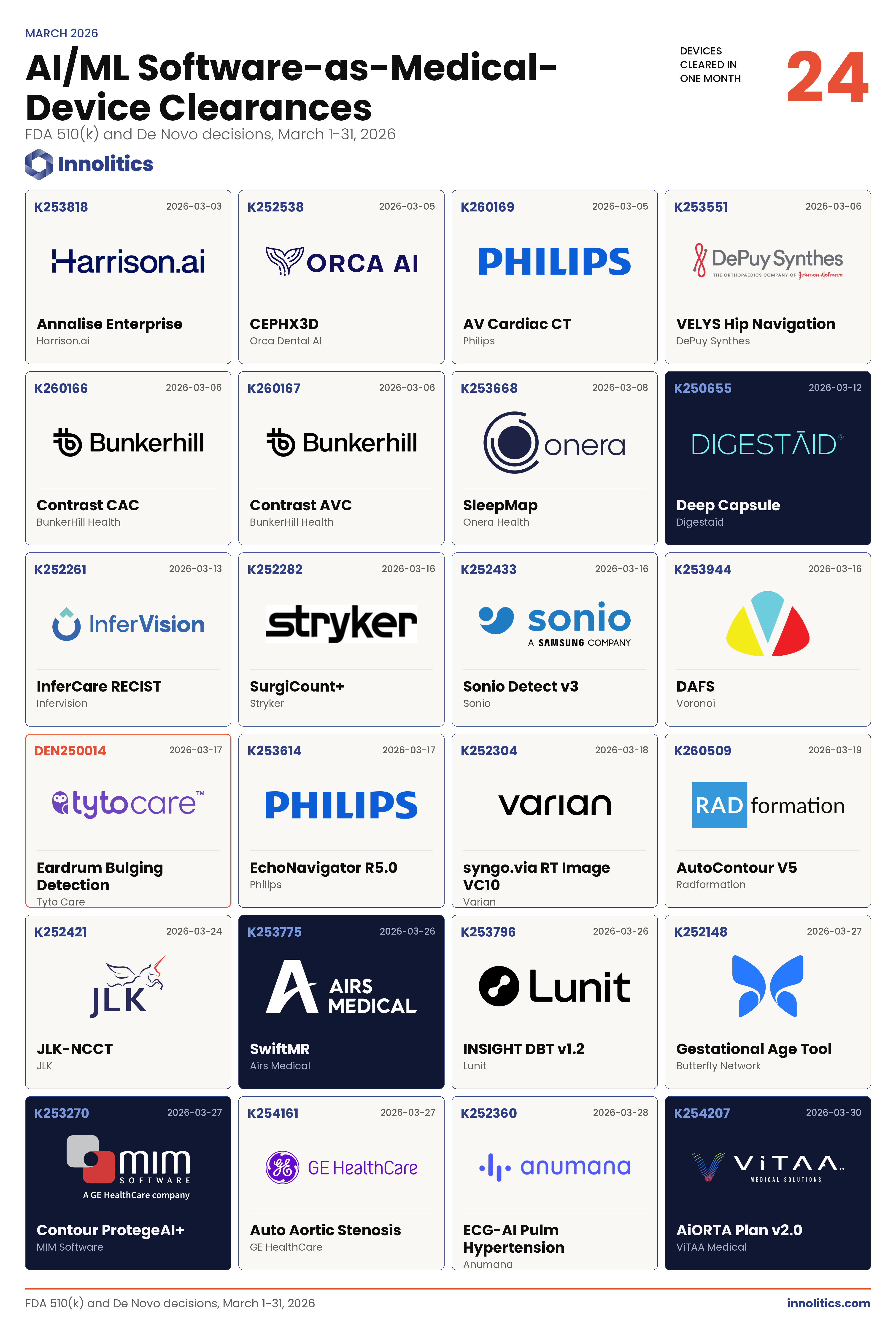

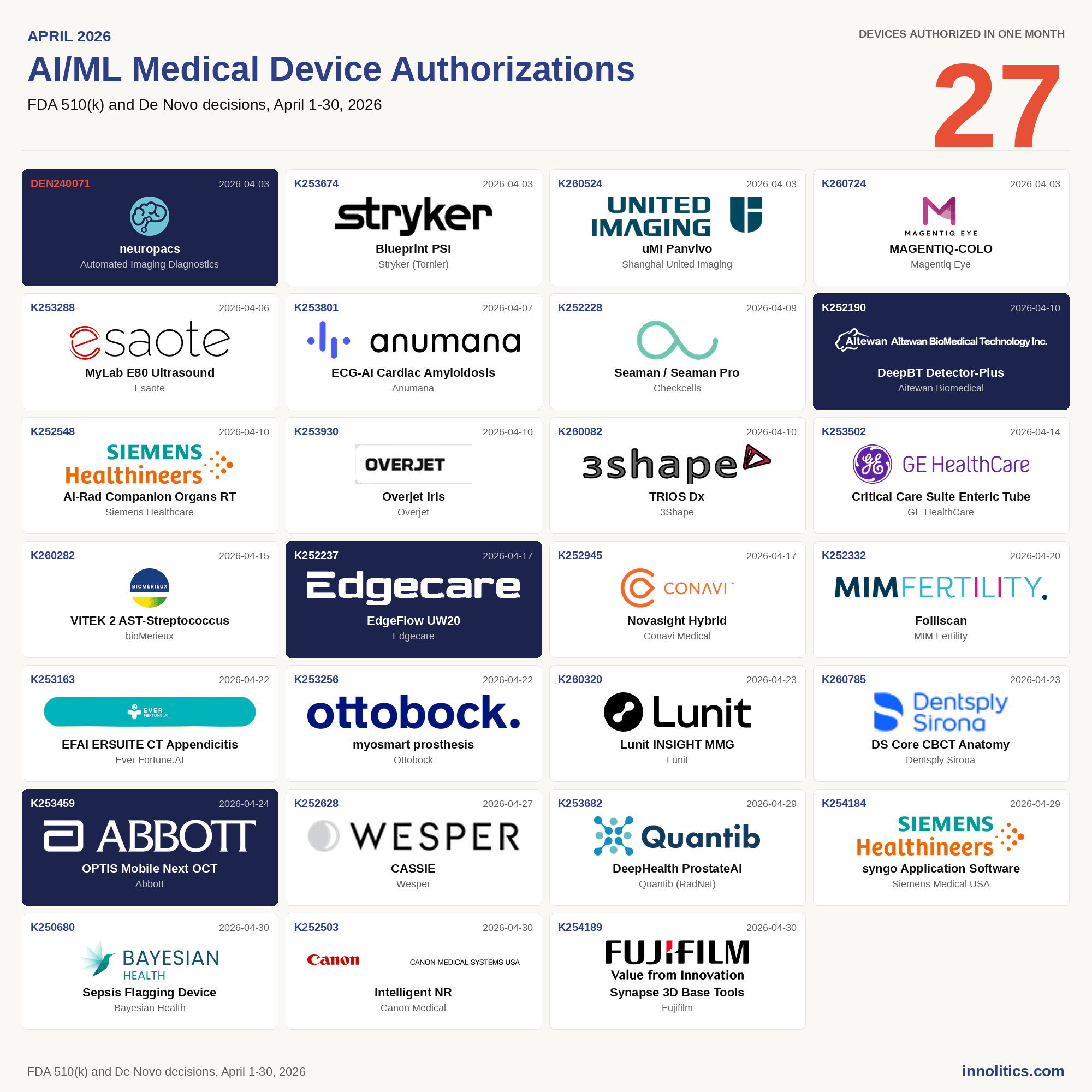

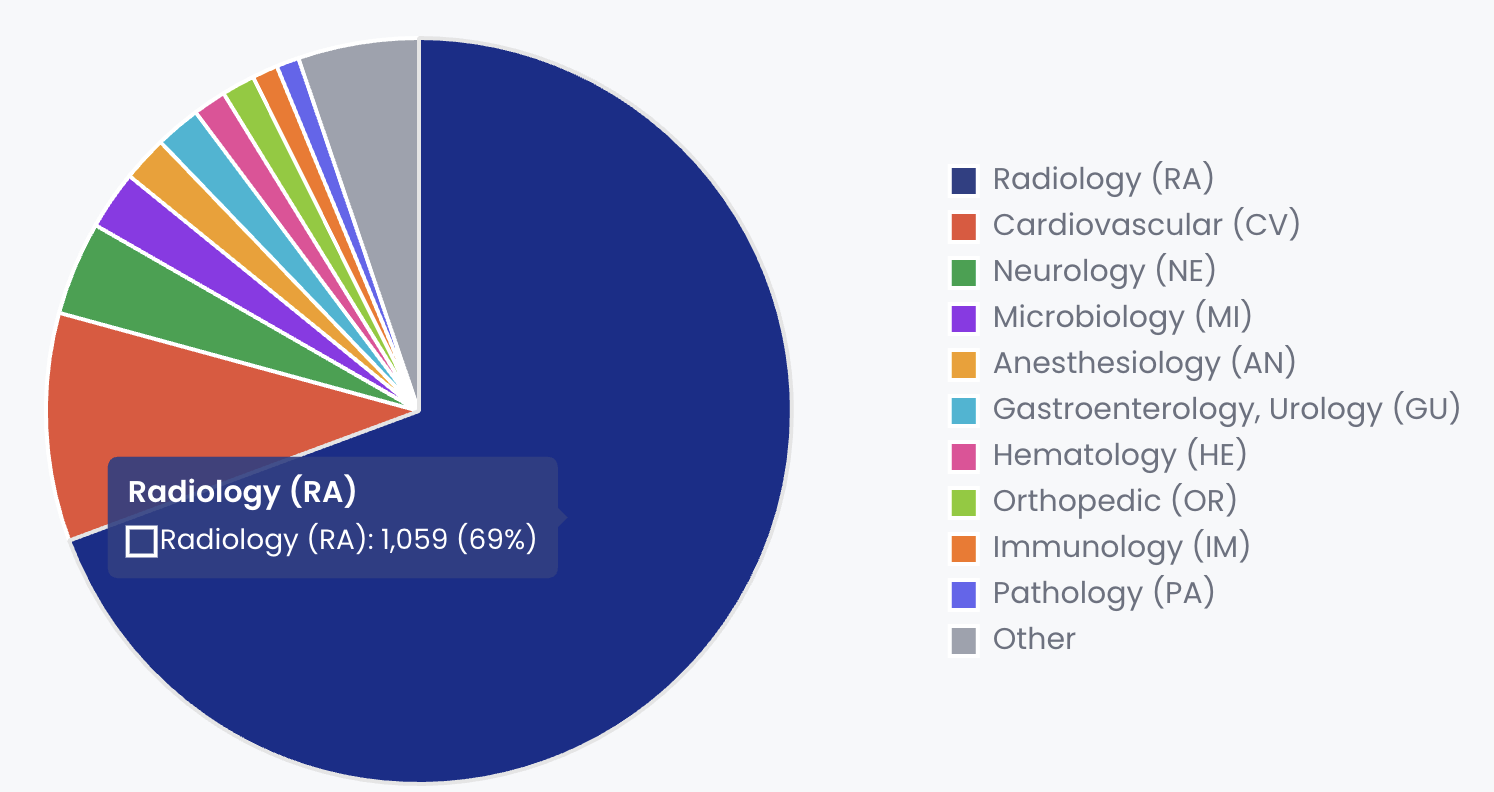

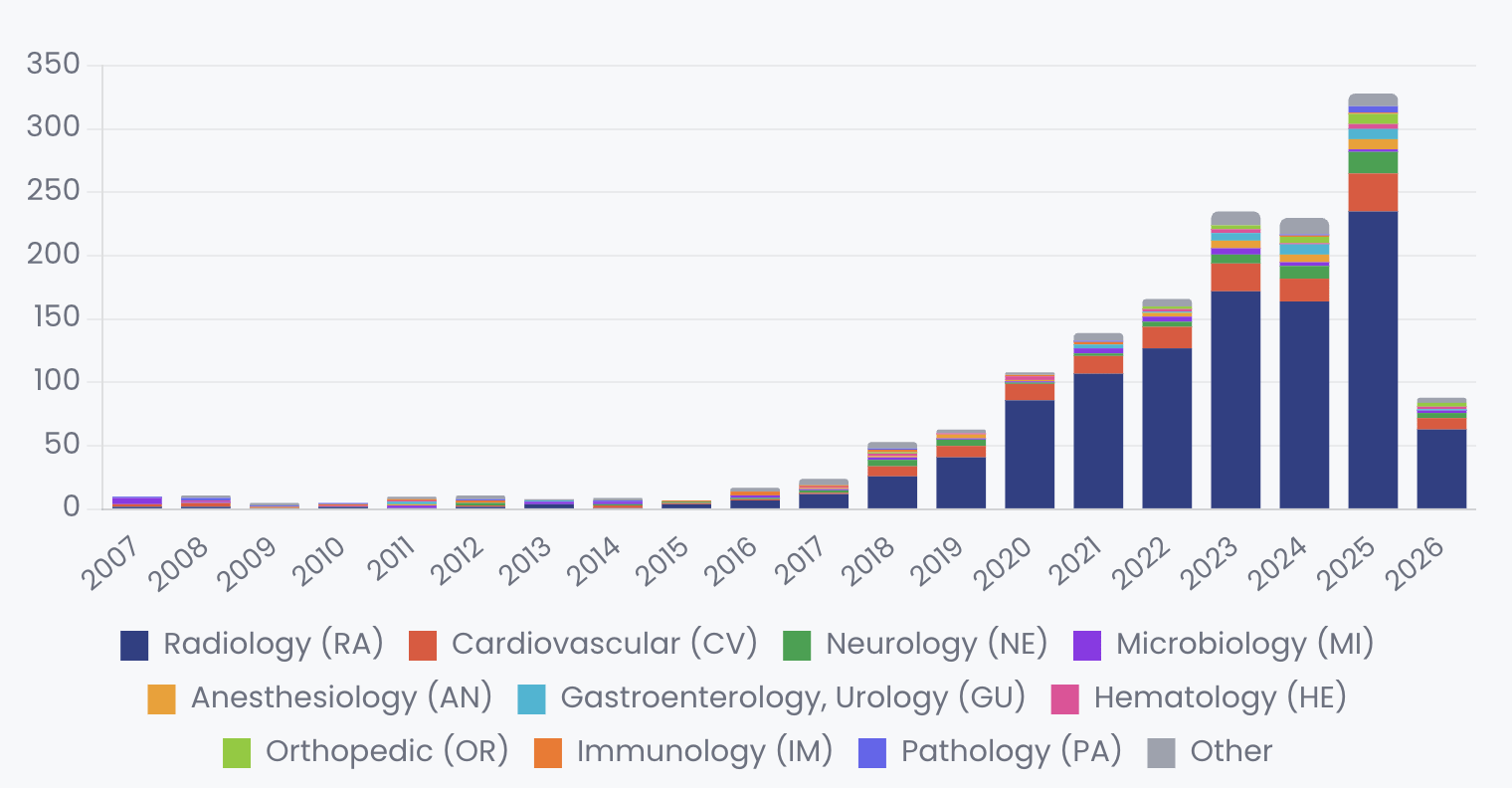

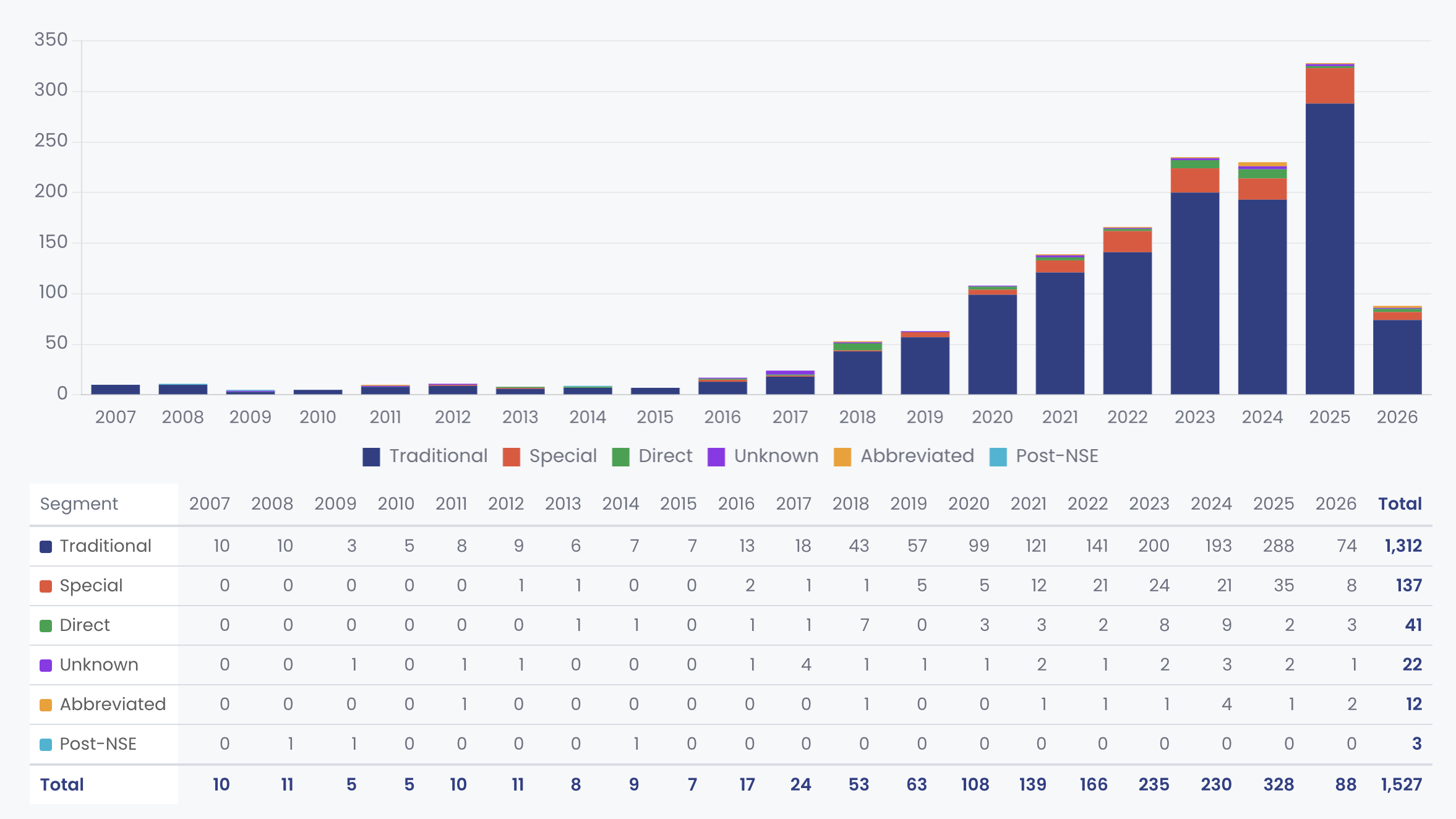

In the Software as a Medical Device (SaMD) space, radiology has been the proving ground for this technology. The FDA has cleared over 1,500 AI-enabled medical devices, with radiology accounting for roughly 69% of the total. The transition from narrow AI to foundation models in this sector is now visible in the FDA's 510(k) clearance data, though often obscured by regulatory language.

In December 2024, Aidoc received FDA clearance for a rib fracture triage tool (K243548), which the company later announced as the first clearance derived from its CARE1 Foundation Model [3]. This was followed by additional clearances for aortic dissection and, in early 2026, multi-triage tools covering up to 11 distinct pathologies in a single workflow.

What the FDA Data Actually Shows

While marketing materials frequently highlight foundation models, the regulatory documentation tells a more nuanced story. A search of FDA decision summaries shows that the explicit term "foundation model" rarely appears in them.

As of May 1, 2026, only two 510(k) decision summaries explicitly mention the term "foundation model":

- K252970: Aidoc's BriefCase-Triage: CARE Multi-triage CT Body (Cleared January 7, 2026). The summary notes the device uses a "foundation model-based artificial intelligence (AI) system to analyze images and highlight cases with detected findings."

- K253578: Aidoc's BriefCase-Triage: CARE Multi-Triage CT for Pneumothorax; Pericardial effusion; Large aortic aneurysm; Shoulder fracture or dislocation (Cleared February 26, 2026). This summary contains identical language regarding the foundation model-based AI system.
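The phrase search behind this count can be sketched as a case-insensitive regular expression run over summary text. This is a minimal illustration, assuming the decision summaries have already been downloaded and converted to plain text; the function name `find_phrase_mentions` and the sample excerpts are hypothetical and are not the exact query used for this article. The pattern allows a space or hyphen between the two words so that compounds like "foundation model-based" are still caught.

```python
import re

# Matches "foundation model", "foundation-model", and the compound
# "foundation model-based", ignoring case.
FOUNDATION_MODEL_PHRASE = re.compile(r"foundation[\s-]+model", re.IGNORECASE)


def find_phrase_mentions(summaries: dict[str, str], window: int = 40) -> dict[str, list[str]]:
    """Return {submission number: [context snippets]} for summaries using the phrase.

    `window` is how many characters of context to keep on each side of a match.
    Summaries with no match are left out of the result.
    """
    hits: dict[str, list[str]] = {}
    for number, text in summaries.items():
        snippets = [
            text[max(0, m.start() - window): m.end() + window]
            for m in FOUNDATION_MODEL_PHRASE.finditer(text)
        ]
        if snippets:
            hits[number] = snippets
    return hits


if __name__ == "__main__":
    # Illustrative excerpts assembled from quotations in this article,
    # not full decision-summary texts.
    summaries = {
        "K252970": "The device uses a foundation model-based artificial intelligence (AI) system to analyze images.",
        "K253578": "The device uses a foundation model-based artificial intelligence (AI) system.",
        "K243548": "The device uses a deep learning algorithm trained on labeled images.",
    }
    for number, snippets in find_phrase_mentions(summaries).items():
        print(number, snippets)
```

Returning context snippets rather than a bare count keeps a human in the loop: each hit can be checked to confirm the phrase describes the device itself and not, for example, a cited reference.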

Evidence Summary Table

The table below summarizes the evidence gathered across the three tiers, linking directly to the Innolitics FDA Device Explorer records and the supporting public research or press releases.

| Tier | Device (510(k) / De Novo No.) | Decision Date | Evidence Summary | Source Links |
|---|---|---|---|---|
| Tier 1 | Aidoc CARE Multi-triage CT Body (K252970) | Jan 7, 2026 | The 510(k) summary explicitly states the device uses a "foundation model-based artificial intelligence (AI) system." | FDA Record |
| Tier 1 | Aidoc CARE Multi-Triage CT (K253578) | Feb 26, 2026 | The 510(k) summary explicitly states the device uses a "foundation model-based artificial intelligence (AI) system." | FDA Record |
| Tier 2 | Qure.ai qXR-Detect (K251934) | Jan 16, 2026 | The 510(k) summary explicitly notes the replacement of a CNN encoder with a "Vision Transformer (ViT)-based encoder." | FDA Record |
| Tier 2 | Apple Hypertension Notification Feature (K250507) | Sep 11, 2025 | The 510(k) summary explicitly describes the use of a "self-supervised learning method" on PPG data. | FDA Record |
| Tier 2 | ArteraAI Prostate (DEN240068) | Jul 31, 2025 | The De Novo summary explicitly mentions the use of "self-supervised learning" for feature extraction from whole slide images. | FDA Record |
| Tier 2 | Medicalgorithmics DeepRhythmAI (K250932) | May 27, 2025 | The 510(k) summary explicitly lists the use of "CNN and transformer models" for arrhythmia detection. | FDA Record |
| Tier 3 | Aidoc BriefCase Rib Fractures (K243548) | Dec 11, 2024 | The 510(k) summary uses generic "deep learning" language, but Aidoc's press release announced this specific clearance as powered by their CARE1 Foundation Model. | FDA Record; Press Release |
| Tier 3 | Tempus ECG-AF (K233549) | Jun 20, 2024 | The 510(k) summary uses generic "machine learning" language, but Tempus has published peer-reviewed research detailing their transformer-based ECG models. | FDA Record; Research Paper |
| Tier 3 | DeepHealth Saige-Dx (K251873) | Aug 2025 | The 510(k) summary uses generic language, but DeepHealth publicly announced a partnership to build fine-tuned models on HOPPR's medical-grade foundation model. | FDA Record; Press Release |
| Tier 3 | Paige Prostate (DEN200080) | Sep 21, 2021 | The De Novo summary describes an older multiple instance learning architecture. Paige has since published the Virchow foundation model, which explicitly benchmarks against this older cleared device. | FDA Record; Virchow Paper |
| Tier 3 | a2z-Unified-Triage (K252366) | Nov 24, 2025 | The 510(k) summary uses generic "deep learning" language, but the company and its founders publicly describe it as a "generalist system" that scales across many diseases, and it flags seven distinct conditions simultaneously. | FDA Record; Press Release |
| Tier 3 | Annalise Enterprise (K253818) | Mar 3, 2026 | The 510(k) summary uses standard CADt language, but the parent company (Harrison.ai) has publicly launched the Harrison.rad.1 multimodal foundation model, and the product analyzes over 120 findings simultaneously. | FDA Record; Press Release |
| Tier 3 | Lunit INSIGHT MMG (K211678) | Nov 17, 2021 | The 510(k) summary uses generic language, but Lunit publicly markets a "future-ready foundation model strategy" and has published research on self-supervised vision transformers for breast imaging. | FDA Record; Company Strategy |
| Tier 3 | RapidAI Enterprise Suite (e.g., K251151) | Jul 16, 2025 | The 510(k) summaries use generic language, but RapidAI's press releases explicitly state their platform is "underpinned by advanced foundation models trained on multimodal clinical data." | FDA Record; Press Release |

Earlier clearances that industry press attributed to the CARE foundation model, such as the December 2024 clearance for rib fractures (K243548) and the May 2025 clearance for aortic dissection (K251406), describe the technology simply as a "deep learning algorithm" trained on labeled images. This discrepancy highlights a common regulatory strategy. Manufacturers often describe novel underlying architectures using established, familiar terminology to smooth the path to substantial equivalence.

We see this strategy frequently in our own work. When we planned Neosoma's fast lane to FDA clearance, we isolated their new convolutional neural network for FDA review, won clearance in three months, and built a framework for every subsequent product [5]. The goal is to present the FDA with a clear, bounded evaluation of safety and effectiveness, rather than a sprawling technical treatise on the underlying architecture.

Inferred Foundation Model Usage

Because 510(k) summaries often use generic language, a true accounting of foundation models in AI SaMD requires cross-referencing FDA data with peer-reviewed papers, engineering blogs, and corporate press releases. This deeper investigation reveals that the architectural shift is broader than the FDA database suggests.
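The three-tier scheme used throughout this article can be expressed as a simple precedence rule: check the FDA summary for the explicit phrase first, then for named architectures, and only then fall back to outside evidence. The sketch below is a hypothetical illustration of that rule, not the tooling used to build the table; the keyword lists, the `DeviceRecord` type, and `assign_tier` are assumptions. Keyword hits still need manual review, since a word like "transformer" could plausibly refer to electrical hardware in some device summaries.

```python
import re
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical search terms; the article does not publish an exact query list.
TIER1_PATTERN = re.compile(r"foundation[\s-]+model", re.IGNORECASE)
TIER2_PATTERN = re.compile(
    r"\b(?:vision transformers?|transformers?|ViT|self-supervised)\b", re.IGNORECASE
)


@dataclass
class DeviceRecord:
    number: str        # 510(k) or De Novo number, e.g. "K252970"
    summary_text: str  # plain text of the FDA decision summary
    # Pointers to outside evidence: press releases, papers, generalist-capability notes.
    external_evidence: list[str] = field(default_factory=list)


def assign_tier(record: DeviceRecord) -> Optional[int]:
    """Return 1, 2, or 3 per the article's tier definitions, or None if no evidence.

    Tier 1: the FDA summary uses the phrase "foundation model".
    Tier 2: the FDA summary names a transformer or self-supervised architecture.
    Tier 3: only outside sources link the device to a foundation model.
    """
    if TIER1_PATTERN.search(record.summary_text):
        return 1
    if TIER2_PATTERN.search(record.summary_text):
        return 2
    if record.external_evidence:
        return 3
    return None


if __name__ == "__main__":
    rib = DeviceRecord("K243548", "deep learning algorithm", ["Aidoc press release"])
    print(rib.number, assign_tier(rib))
```

Checking the tiers in order means a summary that uses both the phrase and an architecture term lands in Tier 1, the strongest evidence, which matches how the table above ranks devices.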

Explicit Architectural Disclosures

While they avoid the phrase "foundation model," several recent clearances explicitly disclose the architectures that typically underpin foundation models:

- Qure.ai qXR-Detect (K251934): Cleared in January 2026, this chest X-ray triage tool explicitly states in its 510(k) summary that the company replaced its existing Convolutional Neural Network (CNN) encoder with a "Vision Transformer (ViT)-based encoder."

- Apple Hypertension Notification Feature (K250507): Cleared in September 2025, this over-the-counter mobile medical application analyzes photoplethysmography (PPG) data. The FDA summary explicitly notes the deep-learning model was developed using a "self-supervised learning method" on a massive dataset of over 86,000 participants.

- ArteraAI Prostate (DEN240068): Granted through the De Novo pathway in July 2025, this device performs an algorithmic assessment of features that are extracted from whole slide images using "self-supervised learning."

- Medicalgorithmics DeepRhythmAI (K250932): Cleared in May 2025, this ECG analysis software explicitly lists the use of "CNN and transformer models" in its FDA summary.

Inferred Usage from Public Research

In other cases, the linkage between a cleared product and a foundation model must be inferred from the company's public research footprint.

For example, Paige AI has published extensively on Virchow, a massive 632-million-parameter vision transformer trained via self-supervised learning on over 1.5 million whole slide images [6]. While Virchow is a state-of-the-art foundation model, Paige's currently cleared product, Paige Prostate (DEN200080), predates Virchow and relies on an older multiple instance learning architecture. The Virchow paper explicitly benchmarks the new foundation model against the older, FDA-cleared Paige Prostate model [6]. This illustrates a common industry pattern: companies are building massive foundation models in their research divisions, but their commercial, FDA-cleared products often still run on earlier, narrower architectures. These foundation models will likely reach the market through a Traditional or Special 510(k), or through a PCCP if the manufacturer already has one authorized.

Similarly, companies like PathAI and DeepHealth have announced major foundation model initiatives. PathAI developed the PLUTO foundation model, but their recent FDA clearance for AISight Dx (K243391) is for a digital pathology viewer, not an AI diagnostic algorithm. DeepHealth announced a partnership with HOPPR to build fine-tuned models using HOPPR's medical-grade foundation model, but DeepHealth's current Saige-Dx mammography clearances (K220105, K251873) are described simply as deep learning algorithms [7].

Tempus AI provides another example. The company has published research on transformer-based ECG models, but the 510(k) summary for their Tempus ECG-AF device (K233549) describes it only as a "machine learning-based software." The connection to foundation model architectures requires reading between the lines of their technical publications.

A similar pattern appears with a2z Radiology AI. In November 2025, the company received clearance for a2z-Unified-Triage (K252366), a single device that flags seven urgent findings on abdomen-pelvis CT scans simultaneously. While the FDA summary describes the technology simply as "deep learning algorithms," the company's founders publicly describe it as a "generalist system that could scale across conditions," and the company's tagline is "One AI. Many Diseases." This multi-task capability from a single model is a hallmark of foundation model architecture, even if the regulatory filing relies on traditional CADt terminology.

A Broader Industry Pattern

The a2z example is not an isolated case. A systematic sweep of recent multi-task and generalist AI clearances reveals a widespread industry pattern: companies are deploying foundation models in production while maintaining conservative, traditional language in their FDA submissions. This is likely intentional: it downplays differences in technological characteristics for FDA reviewers and discloses as little as possible to future competitors.

For instance, Harrison.ai (parent company of Annalise.ai) publicly launched the Harrison.rad.1 multimodal foundation model, describing it as a specialized Large Language Model (LLM) for radiology [10]. Their Annalise Enterprise CXR product can detect over 120 distinct findings on a chest X-ray. Yet, their most recent FDA clearance (K253818, March 2026) makes no mention of foundation models or transformers, relying instead on standard Computer-Aided Triage (CADt) terminology.

Similarly, RapidAI's press releases explicitly state that their stroke and emergency triage platform is "underpinned by advanced foundation models trained on multimodal clinical data" [11]. However, their numerous 2025 FDA clearances (such as K251151 for Rapid CTA 360) describe the technology using generic machine learning terms. Lunit follows the same playbook, publicly marketing a "future-ready foundation model strategy" and publishing research on self-supervised vision transformers [12], while their FDA-cleared INSIGHT products (K211678, K231470) are described simply as deep learning algorithms.

This discrepancy appears to be a deliberate regulatory strategy: framing a new architecture in familiar terms keeps the review focused on the device's performance rather than on how it was built.

The Path Forward

The transition to foundation models in AI SaMD is well underway, driven by the promise of faster development cycles and broader clinical utility. Companies like HOPPR are even offering their foundation models as a platform for other medtech companies to build their own fine-tuned tools, further accelerating adoption [1].

However, the regulatory bar remains high. The reliance on the 510(k) pathway means that new foundation model-based devices must still demonstrate substantial equivalence to existing, often narrower, predicate devices. The clinical evidence must support the specific indications for use, regardless of how powerful the underlying model might be.

For companies developing these tools, the challenge is twofold. They must harness the technical power of foundation models while building a regulatory strategy that satisfies the FDA's demands for safety, effectiveness, and generalizability.

The FDA's planned tagging of foundation models and the increasing use of PCCPs will provide greater visibility into this shift in the coming years. For now, the May 1, 2026 snapshot shows an industry in transition, where the technical reality of foundation models is just beginning to surface in the official regulatory record.

Need Help with Your Foundation Model Submission?

Innolitics is an engineering-first consultancy specializing in AI/ML SaMD regulation. We know how to frame a foundation model architecture for substantial equivalence, design test sets that hold up to FDA's scrutiny, and use a PCCP to avoid re-clearing every fine-tune.

We go deeper than strategy. Our team can train the models, implement V&V, write all the documentation, and sit across the table from FDA reviewers when the hold letter comes back. We have shipped clearances in as little as three months when the architecture is scoped right, and we offer speed and certainty guarantees on our engagements.

If you are building (or have already built) a foundation model and need to reach commercialization before your competitors do, you need a firm that can add indications as quickly as your foundation model can support them, backed by a speed and certainty guarantee.